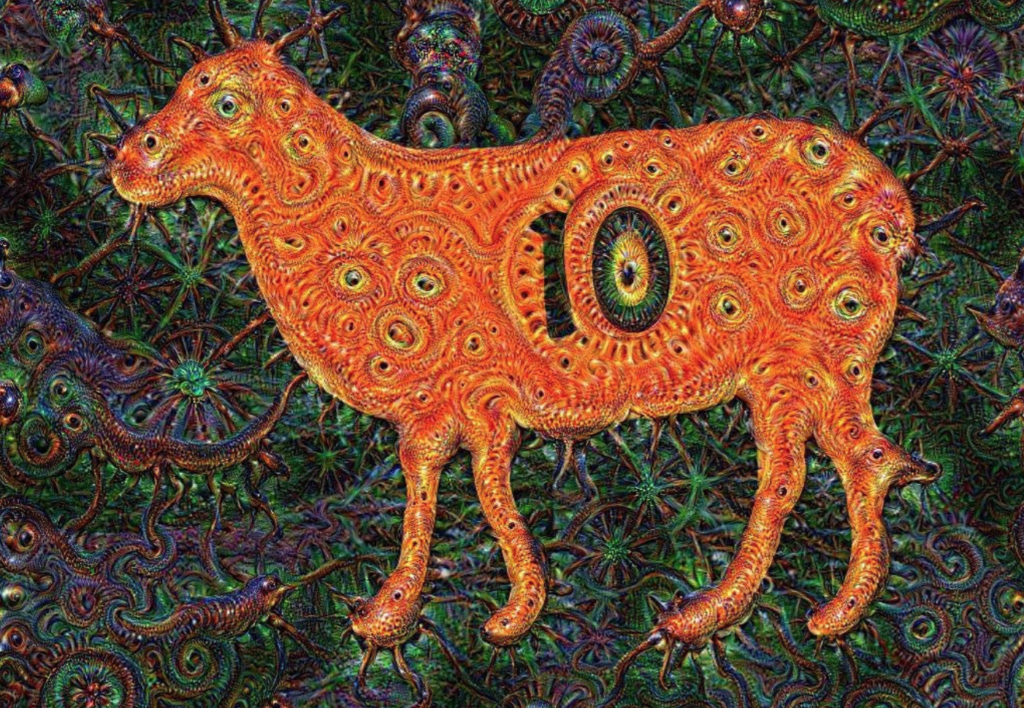

Did you hear? Google has dreams! And they’re really trippy. You’ve got to check it out.

Since Google’s “Deep Dream” project landed with great fanfare onto our collective Twitter feeds, it’s prompted a mountain of online aggregation, analysis, and sharing. And there’s little wonder why. With beautiful/disturbing/uncanny visuals, references to the impressive-sounding “artificial neural networks,” and origins in one of the most fascinating companies in the world, the story is a click farmer’s dream. It’s no surprise that so many publishers rushed to fill the stream with takes on the technology.

Unfortunately, much of the actual information sharing of these pieces – you know, the journalism – has been counterproductive. With click-begging headlines, useless metaphors, vague discussion of essential information, and the general ambient woowoo that chokes our tech media, stories about Deep Dream have demonstrated the capacity for aggregation-style internet journalism to mislead. Faced with an interesting but limited project, one which utilizes complex technologies that require nuance and care to talk about meaningfully, our professional technology writers have fallen down, hard, on the job.

The commons misunderstandings about Deep Dream make this failure evident. Judging by posts I’ve seen on Facebook, Twitter searches, and thousands of comments on dozens of articles, a lot of people believe the following about this story:

- That Google has built a human-like general AI – that is, an artificial intelligence that thinks the way a human does, or

- That Google itself (whether the search engine, the servers, or the company writ large is never clear) somehow constitutes a human-like general AI, and

- This AI has dreams similar to the way human beings have dreams, as something like a conscious mind having something like conscious experiences, which

- Are somehow mined by Google’s software for the images that are being ladled out onto the internet, post by post, by our insatiable content industry.

You may season this narrative with a host of related misunderstandings. The ubiquitous claim that these images are “like being on acid” has added a whole new layer of utterly unfounded claims about this project and what it does. If you poke around a little bit, you’ll find many people on social media who think that these images are the product of altered consciousness – which would be impressive, given that they don’t emerge from a consciousness at all.

Google has kept the wraps pretty tight on the actual processes involved in the Deep Dream project. A friend of mine who’s a science journalist tells me that she was informed by Google that they would be doing no interviews about the project. An explanatory blog post from the Google research team is useful, though it (understandably) fails to reveal some of the specifics about the program. Deep Dream utilizes artificial neural networks, an impressive array of technologies with a name that, unfortunately, seems particularly conducive to creating misunderstanding. These artificial neural networks are machine learning systems, described in a useful Wikipedia article as “non-linear, distributed, parallel and local processing and adaptation” systems. Like most machine learning, these systems are still fundamentally probabilistic – that is, they absorb large amounts of data and use that data to make predictions in order to solve practical tasks, utilizing statistical and algorithmic techniques that are more complicated than I’ll ever be able to understand.

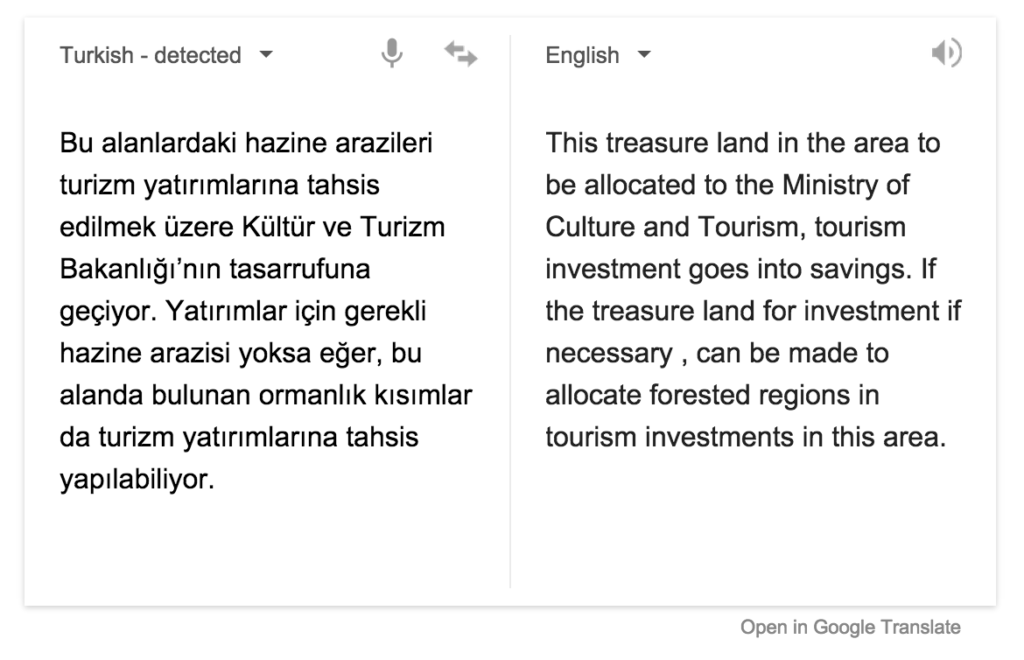

It’s essential to say the following: while artificial neural networks are inspired by animal neurons, they do not function in a substantially similar way to animal neurons. That’s no insult to the developers of these technologies, which are feats of modern engineering – these systems are not intended to function in a substantially similar way to animal neurons. As the relevant Wikipedia article says, “In modern software implementations of artificial neural networks, the approach inspired by biology has been largely abandoned for a more practical approach based on statistics and signal processing.” From Google’s standpoint, that makes perfect sense. Their artificial intelligence guru, Peter Norvig, has become a god in the field largely through approaches that do not attempt to mimic human thinking, but rather churn through huge amounts of data, looking for patterns in order to make chancy decisions. Google Translate demonstrates both the advantages and limitations of probabilistic approaches to AI. By looking at vast numbers of human translations of certain words and phrases, the system makes educated guesses about the best possible translation. The near-instantaneous responses and the breadth of languages is remarkable; the inadequacy of the system to simulate actual natural human communication is sobering.

Google’s image search function operates in something like the same way. Thanks to Google’s massive amounts of data, in which images are matched to captions, titles, or descriptions that have been assigned by humans, the company has the ability to train its artificial neural network to identify images in ways similar to humans, though as with Google Translate, there are occasional wild misidentifications. As the Deep Dream blog post says, “We train an artificial neural network by showing it millions of training examples and gradually adjusting the network parameters until it gives the classifications we want. The network typically consists of 10-30 stacked layers of artificial neurons. Each image is fed into the input layer, which then talks to the next layer, until eventually the ‘output’ layer is reached. The network’s ‘answer’ comes from this final output layer.”

Where does Deep Dream come in? In a sense, by reverse engineering the process. One of the ways Google’s engineers double check the efficacy of their image identification engine is by having the system output its vision of what a particular term “looks like,” or as the blog post from the Google research team says, “to turn the network upside down and ask it to enhance an input image in such a way as to elicit a particular interpretation.” Google Deep Dream allows users to input images that are then enhanced based on the Google image identification engine, using a user- or engine-defined abstraction layer to generate an output based on the vast data set of images Google has at its disposal.

Google Deep Dream, then, is in essence a very complex and interesting image filter. It utilizes processes developed for a far deeper purpose, and represents, in an oblique way, the cutting edge of current machine learning techniques. But in a fundamental sense, it’s a set of image filters – beautiful, frequently uncanny, and deeply interesting, but not what many people have taken it to be.

Some will, no doubt, take all of this for disrespect towards Google’s project. That’s not my intent. It’s a cool project that uses incredibly impressive technology to produce unique visuals. No, my beef is with how the current internet content economy has misled people about what this technology is and does. Many people would lay the blame for such misunderstanding on the feet of those readers. Hey, if the readers don’t get the story right, then that’s their fault, right? Not really. Readers have a responsibility to read skeptically, but we need to be realistic about the average time invested by the average reader on the average piece published on the internet. Whether we like it or not, we are living in an age of aggregation, where large amounts of information are distilled and disseminated, all day, every day. Under those conditions, writers have tremendous responsibility to make their output as accurate as possible.

So take Gizmodo, one of the most popular tech blogs in the world. Writers there referred to Deep Dream as Google’s “daydreams” not once, not twice, but three times. If these references were carefully explained as metaphors, it’d be alright, but in each post there are misleading descriptions of the software. Lines like “It’s official: Androids dream of creepy insectoid sheep” make misunderstanding inevitable. What’s frustrating is that, particularly in the comments, Gizmodo writers frequently demonstrate that they do understand the technology on a deeper level. They just keep the click-triggering copy near the top. Or take The Washington Post’s Jeff Guo. Guo does an effective, deep-dive into the technology. But early in his piece, he says that Deep Dream images “are hallucinations produced by a cluster of simulated neurons trained to identify objects in a picture. The researchers wanted to better understand how the neural network operates, so they asked it to use its imagination. To daydream a little.” This is precisely the type of language that leads to widespread misunderstanding. I recognize that metaphorical language and symbolism are often necessary to explain complex topics to the public. But in a world where we know most people read very little of the average article we click on, this kind of paragraph threatens to leave readers more confused rather than less.

Unfortunately, the incentives of today’s media economy have little to do with accuracy. With the collapse of paid classified advertisements that were traditionally the backbone of newspaper economics, and the general failure to ever implement direct monetization schemes to replace subscription and newsstand revenues, our newsgathering industries are now forced to rely almost exclusively on per-impression advertising. In order to hit page view, click, and impression mandates, writers must make their headlines as attractive to click as possible, particularly for users on social media like Facebook or Twitter. And what attracts clicks, unfortunately, is sensationalism and exaggeration – always dangerous, but particularly in regards to issues of such intricacy as artificial intelligence. Science and tech researchers, meanwhile, often have little incentive to correct exaggerated accounts of their work. After all, funding is hard to come by, and good publicity can go a long way to secure a project’s future. For for-profit entities, the desire for good publicity is even stronger. Publishers need clicks, researchers and companies need good pub, and the only people that suffer are the readers whom our media is supposed to inform.

These issues are hardly restricted to Google Deep Dream or AI. Take a post on Jalonpnik about Lexus’s new “hoverboard,” headlined “The Lexus Hoverboard Is Real And I Rode It.” The admission that the product in question requires a liquid nitrogen refueling, and can only operate on massively expensive pre-built courses, comes in the sixteenth paragraph. In other words, the hoverboard is real, except that it isn’t. Gizmodo’s Annalee Newitz wrote in August that Tesla’s Powerwall battery pack will “reinvent energy.” Not just household electrical consumption, mind you, but energy. A mighty bold claim for a novel energy storage solution that has barely been adopted by anyone at all and offers little advantage to many homeowners. TechCrunch’s Jay Samit claims that driving your car will be illegal by 2030, a statement of such grandiose, shit-eating delusion I have to admire it. Look beyond how self-impressed and confident Samit is, and you find a flagrant underestimation of the technological, infrastructural, economic, and legal challenges to self-driving cars, and, in extension, of human agency, of the random chance and luck that drive history, of the stunning political risks that any politicians would endure in enacting such a law, and of the fact that people really, really love their cars. No one can blame Samit for existing in a context where he is rewarded for being ridiculous rather than shamed.

What has to happen to our online economy to stop creating the incentives that make bad journalism like this happen? Under current conditions, there appears to be no way to ensure that the basic philosophy of journalism prevails: that the truth is good enough.

Freddie deBoer is an academic and writer. He lives in Indiana. Find him on Twitter at @freddiedeboer.

This piece originally appeared in the Full Stop Quarterly Issue #2. The Quarterly is available to download or subscribe here.

This post may contain affiliate links.