Telling Stories Through Stories: Intertextuality in Today’s Indie Lit

The images we see from other media become a part of a language that is deep and intimate.

Podcast #8: eteam & Turner Canty

A conversation between Turner Canty, a writer and musician based in Oakland, and Franziska Lamprecht and Hajoe Moderegger, better known as eteam.

Were they ancient abductees? Not exactly. The relation between the experiences described in the Hekhalot literature and those of modern abductees is subtler and more complex than that, with major differences that must be given full weight.

Full Stop Quarterly: Genre Expectations

This issue of the Full Stop Quarterly is about genre, its limits, and the liberatory potential we discover at the edges of generic forms.

Podcast #7: S. D. Chrostowska & Tamara Faith Berger

Novelist and critic S. D. Chrostowska discusses her new book, The Eyelid, with fellow novelist Tamara Faith Berger.

Sponsored: Top 10 Films with 420 in the Title

To celebrate today’s release of 4/20, the latest holiday film from the producers of Valentine’s Day, we present the top ten films with 420 in the title.

The following playlist is humbly submitted for your listening pleasure from Full Stop, your full service literary journal.

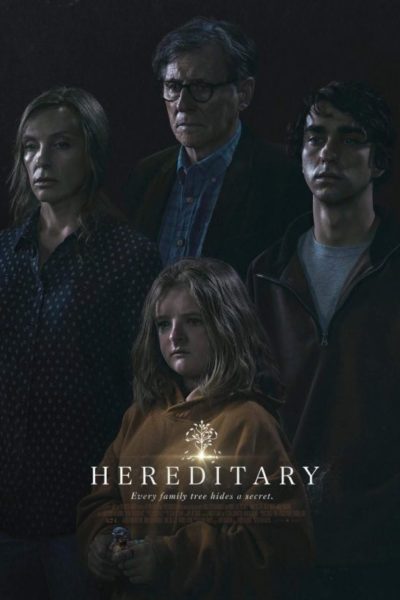

Prophetic Dreams of Chaos: Expected Acts of Mourning in Love and Death

There is something revealing when you decide to watch a horror movie alone on a weekday — especially in the wake of a tumultuous breakup.

Podcast #6: Amina Cain & Caren Beilin

Author Amina Cain talks with editor Caren Beilin about illness, health, medical gaslighting, slowly unraveling, and more!

There’s a profusion, a tailback of vehicles near the water’s edge, in places and positions that cause trouble.